Reviews in the AI Era: ChatGPT, Gemini, Perplexity

Continue the Reviews & Reputation series with Part 7 of 8.

You can have the best review acquisition system, the cleanest response framework, the most sophisticated automation, and the deepest multi-platform AI optimization, and still fail if your team does not execute consistently. Systems do not generate reviews. People do, supported by systems. The 3-layer Review-First Culture Stack is the human infrastructure that turns review systems into daily team habits. The Leadership Layer is where owners model the behavior and make reviews visible at the top. The Process Layer combines role-play training, the Authentic Ask Framework, and workflow integration to remove awkwardness. The Recognition Layer celebrates the asks (not just the results) through technician spotlights, visible team metrics, and bonus structures designed to stay within platform TOS. Most review training fails because it happens once, uses awkward scripts, and lacks ongoing accountability. The fix is cultural, not procedural, and this article covers the framework to build it. As the series finale, Part 8 ties together the systems from Parts 1 through 7 with the operational reality of getting a real contracting team to run them consistently across hundreds of jobs per month.

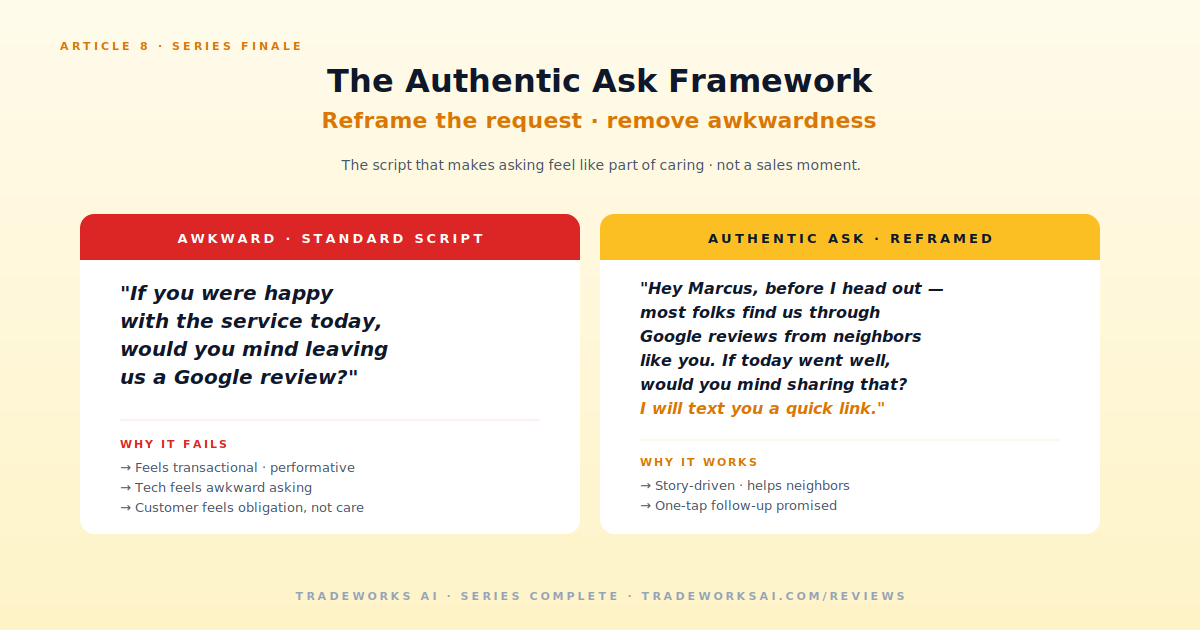

Most contractor review training looks like this. The owner reads an article about reviews. They schedule a 30-minute team meeting. They hand out a script: "If you were happy with the service today, would you mind leaving us a Google review?" They emphasize the importance. Everyone nods. The next 30 days produce a small bump in reviews. After that, decay. Six months later the team is asking less often than they did before the training. Five failure points drive that decay.

One-time training. Behavior change requires repetition. A single session does nothing.

Awkward scripts. The standard "would you mind leaving a review" feels transactional and performative. Both the tech and the customer feel it.

No follow-up or reinforcement. Without weekly visibility on who is asking and who is converting, there is no accountability.

No accountability or metrics. If you cannot see who asked on which jobs, you cannot improve the system or the people in it.

Owner does not model the behavior. If the owner does not ask customers when on a job site, the team learns it is not actually important.

A culture that produces consistent reviews without awkwardness has three layers operating together. Missing any one of them produces the decay pattern above.

The first layer is leadership. Reviews start at the top. Owner and manager behavior signals what actually matters in the business. Three leadership behaviors build the culture.

Visible priority. Reviews show up in every team meeting. Reviews from the previous week are read aloud. Mentions of specific technicians are highlighted. The metric is on the wall, not buried in a spreadsheet only the owner sees.

Personal modeling. The owner asks for reviews when on customer-facing jobs. New hires watch this and learn the standard. If the owner only delegates the asks but never makes them, the team learns the asks are optional.

Negative review handling. When a 1 or 2-star review comes in, the owner addresses it as systemic learning, not technician blame. Teams that fear retaliation hide bad reviews instead of surfacing them for resolution. Teams that trust the response process flag concerns early.

The Process Layer covers the training methods, scripts, and workflow integration that remove awkwardness from the actual review request. This is where the Authentic Ask Framework lives.

Authentic Ask Framework (covered in detail below) - reframes the request from a separate sales moment into part of caring about the customer experience.

Role-play training - team members practice the ask on each other before doing it on customers. Recording the best examples builds a team library new hires reference.

Workflow integration - the ask is built into the job-completion routine, not bolted on as a separate step. Customer signs the invoice, tech walks through the work, tech makes the ask, customer receives the SMS link from the automation.

What gets celebrated gets repeated. The recognition layer is what makes the behavior stick over months and years.

Technician spotlights - reviews mentioning specific techs by name get read in team meetings. Tech of the Month based on review mentions, not just job count. The recognition is public and specific.

Visible team metrics - review count, review conversion rate per tech, review mentions per tech. Posted in the office where everyone sees them. Healthy peer comparison without shaming.

Bonus structures designed without violating platform TOS - bonus for ask completion (not for review received). Bonus for review velocity at team level. Bonus for specific recognition in reviews. Never tie individual compensation directly to receiving reviews - this violates Google and Yelp policy and creates the wrong incentive.

The single most consequential element of Layer 2 is how the ask itself sounds. Most review request training fails because the script makes both the tech and the customer feel awkward. The Authentic Ask Framework removes the awkwardness by reframing what the ask actually is.

Why the standard "would you mind leaving a review" script fails: it sounds like a sales close. The customer feels sold. The tech feels like they are asking for a favor. Both parties are uncomfortable. Conversion stays in the 3-8 percent range.

"Mr. Hernandez - I want to make sure we did right by you today. The way we know is when our customers tell us. Would you have 30 seconds to share your experience? I will text you a link before I leave."

HVAC after seasonal maintenance: "Mrs. Thompson, your system is ready for summer. The way I get to keep doing this work is when customers tell others about us. Would you mind sharing what you experienced today? I will send you the link."

Plumbing after emergency repair: "Mr. Garcia, water is back on. I know it has been a long night. When you get a chance tomorrow, if you would not mind sharing how it went, that helps the next family who is in the same situation."

Cleaning recurring service: "Same time next week? Hey - if our team is making the difference for you, the highest compliment is when you tell other people. Would you mind taking a minute to share?"

Electrical large project completion: "Panel upgrade is done, code-compliant, inspector should pass it. Word of mouth is how we keep getting work in this neighborhood. If you would not mind sharing what the project was like, that means a lot."

Three training formats produce sustained behavior change where the one-time meeting fails: role-play sessions, a recorded library of the best asks, and weekly review meetings.

Team members pair up. One plays customer, one plays technician. Run the Authentic Ask three times in different scenarios. Owner observes and gives feedback. Repeat monthly with new scenarios.

Why it works: the awkwardness comes from saying unfamiliar words out loud. Practice removes that. Techs who have role-played the ask 30 times do it without thinking on real jobs.

Best asks from the team get recorded (with customer permission) and added to a training library. New hires watch the library before going into the field. Existing team members reference it when asks feel stale.

15-30 minute team meetings reviewing the past week. Reviews read aloud (especially mentions). Conversion rates discussed. Any negative reviews addressed as systemic learning. Brief celebration of wins. Adjustments for the next week.

Why it works: weekly cadence keeps reviews top-of-mind. Public read-alouds reinforce specificity by example. Issues surface early instead of festering.

The recognition layer is where well-intended programs go wrong. Below are five recognition tactics that work, followed by five that backfire.

Technician of the Month based on review mentions and conversion rate. Public recognition with specific stories.

Customer mentions read aloud in meetings. The technician hears specifically what the customer said.

Team review board visible in office. Daily/weekly count, monthly trending.

Bonus tied to ask completion (verified through CRM logging) rather than reviews received. Removes the wrong incentive while still rewarding the behavior.

Quarterly bonus tied to team-level review velocity. Aligns individual behavior with team outcome.
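As a rough illustration of the ask-completion bonus described above, the sketch below pays a flat amount when a tech's CRM-logged ask rate clears a threshold. The function name, the 90 percent threshold, and the dollar amount are assumptions for illustration, not figures prescribed by this series. The point of the design is that reviews received never enter the calculation.

```python
def ask_completion_bonus(jobs_completed: int, asks_logged: int,
                         threshold: float = 0.90,
                         bonus_amount: float = 100.0) -> float:
    """Return the bonus earned for one pay period (hypothetical policy).

    Inputs come from CRM logging of the ask itself. Reviews received
    are deliberately not a parameter.
    """
    if jobs_completed <= 0:
        return 0.0  # no completed jobs, nothing to evaluate
    if asks_logged < 0 or asks_logged > jobs_completed:
        raise ValueError("asks_logged must be between 0 and jobs_completed")
    ask_rate = asks_logged / jobs_completed
    # Pay the flat bonus only when the logged ask rate meets the threshold.
    return bonus_amount if ask_rate >= threshold else 0.0
```

Under these assumed numbers, a tech who logged the ask on 38 of 40 jobs earns the bonus, while a tech who logged it on 30 of 40 does not, regardless of how many reviews either one received.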

Direct pay-per-review. Violates Google and Yelp policy. Creates incentive to fabricate reviews or pressure customers. Long-term damage to platform standing.

Punishing low performers publicly. Creates fear and review hiding. Trust collapses across the team.

Generic gift cards with no context. Recognition without story is forgettable and feels transactional.

Ranking systems that produce one winner and many losers. Review culture is collaborative, not zero-sum.

Asking techs to drive review acquisition without giving them visibility on results. They cannot improve what they cannot see.

Even with the Authentic Ask framework, technicians raise predictable objections. Each has a response.

The honest objection. Solution: workflow integration. The ask becomes part of the job-completion checklist in the FSM. CRM logs whether the ask happened. Reminder system if the box is unchecked.

The Authentic Ask Framework + role-play addresses this directly. Reframe the ask as caring about the customer experience. Practice until the words feel natural.

The data contradicts this. Part 2 covered the conversion math: contractors with the 5-Pillar System hit 15-30 percent conversion. The customers who say no have already self-selected. The customers who say yes do it because the ask was authentic, not because the tech was special.

This calls for a cultural alignment conversation, not more training. Reviews are not a marketing extra - they are how the business wins the next jobs that pay the techs. The ask is part of the customer relationship, which is part of the work itself.

The NPS gate from Part 6 handles this. Detractors get private resolution, not public review requests. Techs do not need to evaluate customer satisfaction in the moment - the workflow does it after. Trust the system.
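The workflow-integration response to the first objection above (checklist item, CRM log, reminder when the box is unchecked) could be sketched roughly as follows. The `CompletedJob` record, its field names, and the two-hour grace period are hypothetical; a real FSM or CRM export would have its own schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class CompletedJob:
    job_id: str
    tech: str
    completed_at: datetime
    ask_logged: bool  # True once the tech checks the "review ask made" box


def jobs_needing_reminder(jobs, now, grace=timedelta(hours=2)):
    """Return completed jobs whose ask box is still unchecked after the grace period."""
    return [j for j in jobs
            if not j.ask_logged and now - j.completed_at >= grace]
```

The grace period is there so a tech is not nagged while still on site; the resulting list can feed whatever reminder channel the team already uses.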

Review culture is taught fastest during the first 30 days. New hires arrive without bad habits and absorb the standard you set.

Day 1: Review culture training as a primary onboarding element. Why reviews matter, the 3-layer culture, the Authentic Ask Framework, role-play with experienced tech.

First 10 jobs: paired with experienced tech who models the ask. New hire observes for first 5 jobs, makes the ask for next 5 with experienced tech present.

First 30 days: tracked. Number of asks made, conversion rate, customer feedback. Owner reviews in 30-day check-in.

30/60/90 day reviews: review culture is a discussion topic in each, not just a one-time training.

Cultural infrastructure runs on cadence. Without rhythm, behavior decays.

Daily: ask happens on every job. Logged in CRM/FSM. Detractors flagged for owner attention same day.

Weekly: 15-30 minute team meeting. Reviews read aloud, mentions celebrated, conversion rates shared, issues addressed.

Monthly: Tech of the Month recognition. Performance metrics across the team. New hire ramp tracked. Process adjustments based on data.

Quarterly: Cultural health review. Are we hiring people who buy into the culture? Are bonus structures producing the right behaviors? Is recognition still landing or has it gone stale?

Percent of jobs with documented review ask. Target: 90 percent or higher. Below 70 percent means workflow integration broke.

Review conversion rate by technician. Target: 15-30 percent per tech with the 5-Pillar System. Outliers high or low get attention.

Tech mentions in review content. Target: 30 percent of reviews name a specific tech. This is the specificity signal from Part 7.

Team meeting attendance and engagement. If attendance drops, culture is decaying.

New hire ramp time to first ask. Target: by job 6, the first job after the 5-job observation period in onboarding. Longer ramp times indicate training gaps.
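The first two metrics above can be computed from per-job records. The sketch below is one possible way to do it, assuming a hypothetical `Job` record exported from the CRM/FSM, and assuming conversion means reviews received divided by asks logged (the series does not spell out the denominator here). The 90 and 70 percent cutoffs are the targets stated above.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Job:
    tech: str
    ask_logged: bool       # the ask was documented in the CRM/FSM
    review_received: bool  # a review was later matched to this job


def ask_rate(jobs):
    """Percent of jobs with a documented review ask."""
    if not jobs:
        return 0.0
    return 100 * sum(j.ask_logged for j in jobs) / len(jobs)


def ask_status(rate):
    """Classify an ask rate against the 90 / 70 percent targets."""
    if rate >= 90:
        return "on target"
    if rate < 70:
        return "workflow integration likely broken"
    return "below target"


def conversion_by_tech(jobs):
    """Percent of logged asks that produced a review, per technician."""
    asked = defaultdict(int)
    converted = defaultdict(int)
    for j in jobs:
        if j.ask_logged:
            asked[j.tech] += 1
            converted[j.tech] += j.review_received
    return {tech: 100 * converted[tech] / asked[tech] for tech in asked}
```

Techs with no logged asks are left out of the conversion table rather than shown as zero, which keeps a missing-ask problem from being misread as a conversion problem.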

Eight articles. One framework. Reputation as the highest-leverage marketing asset contractors have in 2026.

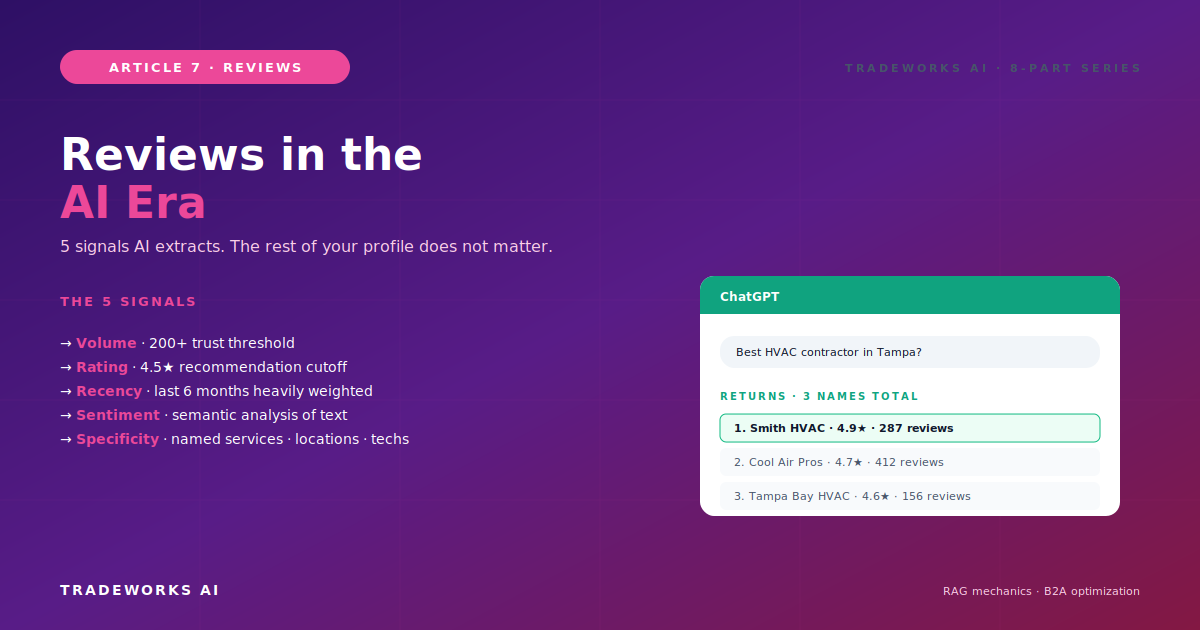

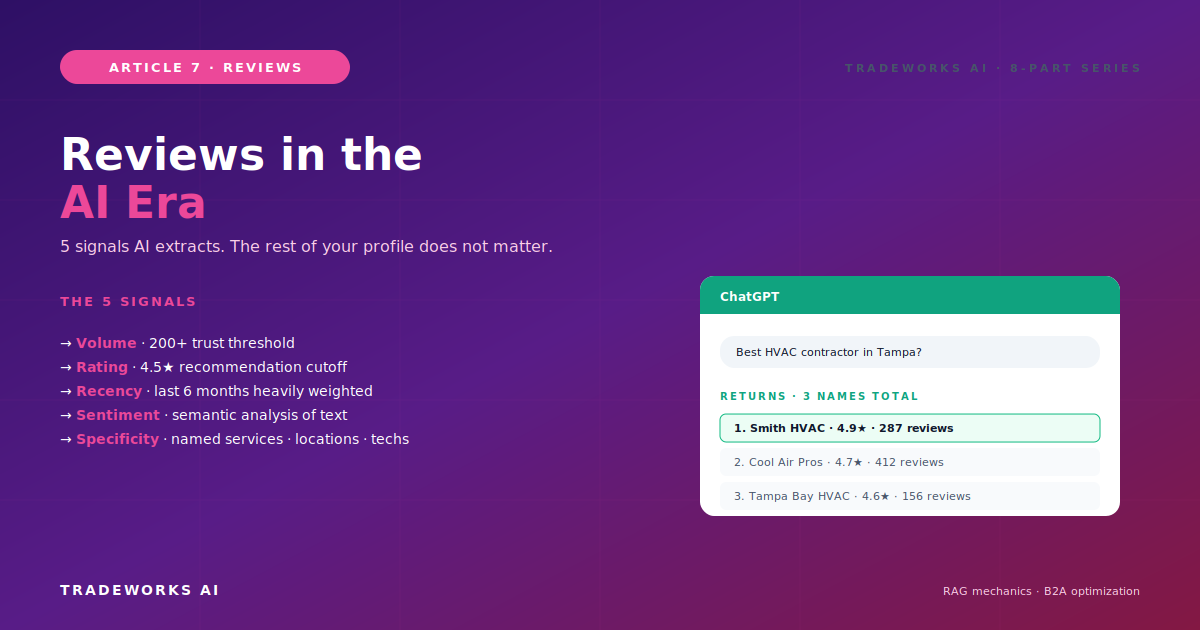

Part 1 established the business case - Three Returns (local search, conversion, AI visibility), the 4.5-star threshold, the 200-review AI threshold.

Part 5 expanded multi-platform - Tier System across Google, Facebook, Yelp, Angi, BBB, Nextdoor.

Part 6 automated the architecture - 5-Layer system across FSM-native, standalone, and DIY tiers.

Part 7 mapped the AI era - 5 AI Review Signals, B2A reality, 73 percent vs 31 percent conversion.

Part 8 built the cultural infrastructure - the Review-First Culture Stack that turns systems into team habits.

Together, the eight articles provide the full operating model for contractor reputation in 2026 and beyond. Systems matter. Frameworks matter. Tools matter. But none of it works without the cultural infrastructure that gets a real team to execute consistently across hundreds of jobs per month.

The contractors who build this culture in 2026 lock in compounding advantages that extend through 2028 and beyond. The cost of waiting is rising every quarter. Let's build something.

Three layers operating together. Leadership: owner asks customers personally and makes reviews visible at the top of every team meeting. Process: Authentic Ask Framework + role-play + workflow integration so the ask is built into job completion, not bolted on. Recognition: celebrate the asks (not just the results), Tech of the Month based on review mentions, visible team metrics. Missing any one of the three produces the decay pattern most contractors experience.

Bonus tied to ask completion (verified through CRM logging) rather than reviews received. This rewards the behavior you want without creating incentive to fabricate reviews. Quarterly bonuses tied to team-level review velocity also work. Never tie individual compensation directly to receiving reviews - this violates Google and Yelp policies and creates the wrong incentive structure.

30 days for initial setup (training, role-play, workflow integration). 90 days for behavior to feel natural for the team. 6 months for the culture to be self-sustaining without daily owner reinforcement. Plan for 12 months of dedicated cultural work before treating it as autopilot.

Resistance usually maps to one of two issues. Awkwardness about the ask itself - solved by the Authentic Ask Framework + role-play. Disconnection from why reviews matter - solved by Leadership Layer (owner modeling, visible priority, tying reviews to business outcomes the team cares about). Persistent resistance after both interventions usually indicates a hiring or cultural alignment issue that goes beyond review training.

Not as a first move. Review asks are a learnable behavior, not a personality trait. Investigate why the asks are not happening: workflow gaps, awkwardness, lack of training, or genuine cultural misalignment. The first three are coachable. The fourth requires honest conversation about whether the role is the right fit. Termination is the rare exception, not the standard response.

Pattern recognition matters. One or two negative reviews mentioning a tech is normal variance. Multiple negative reviews mentioning the same tech is a coaching opportunity, possibly an HR conversation. The data is feedback - use it for development, not for shaming. If the pattern persists after coaching, the tech may not be a fit for customer-facing work even if their technical skills are strong.

Most contractors think their review profile is "fine." Then we benchmark it against the local competitors who are taking the AI recommendation slots. Our free audit checks volume, recency, rating, response rate, and platform coverage — and shows you the highest-leverage moves to get to 4.7+ stars and 200+ reviews this year.

