Review Automation: FSM Workflows on Autopilot

Continue the Reviews & Reputation series with Part 6 of 8.

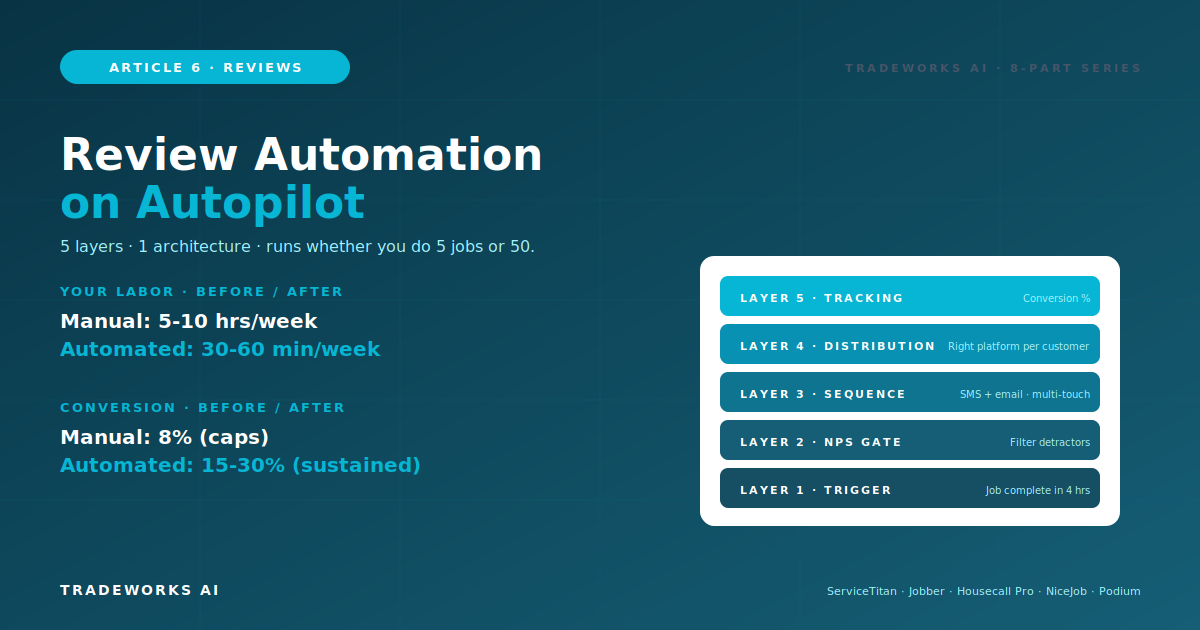

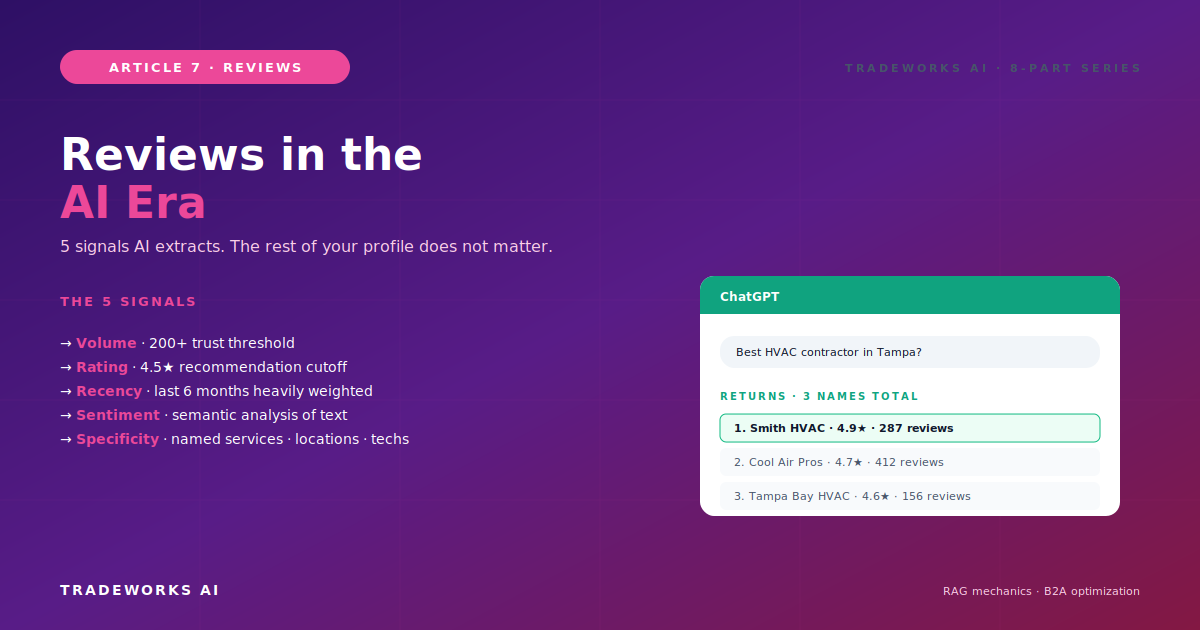

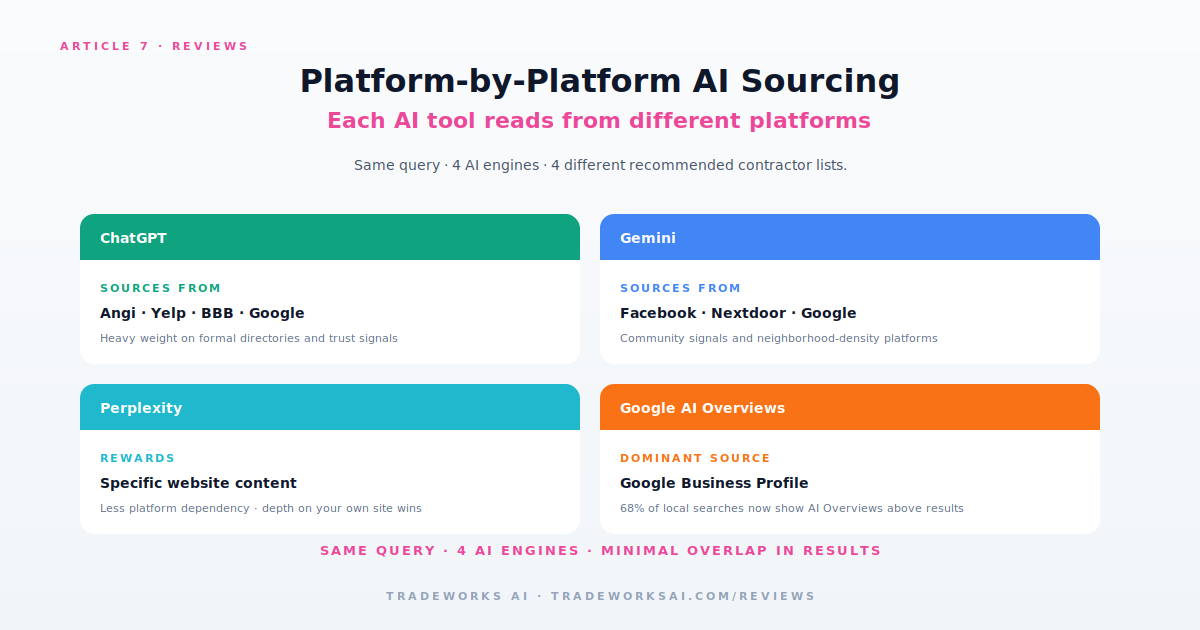

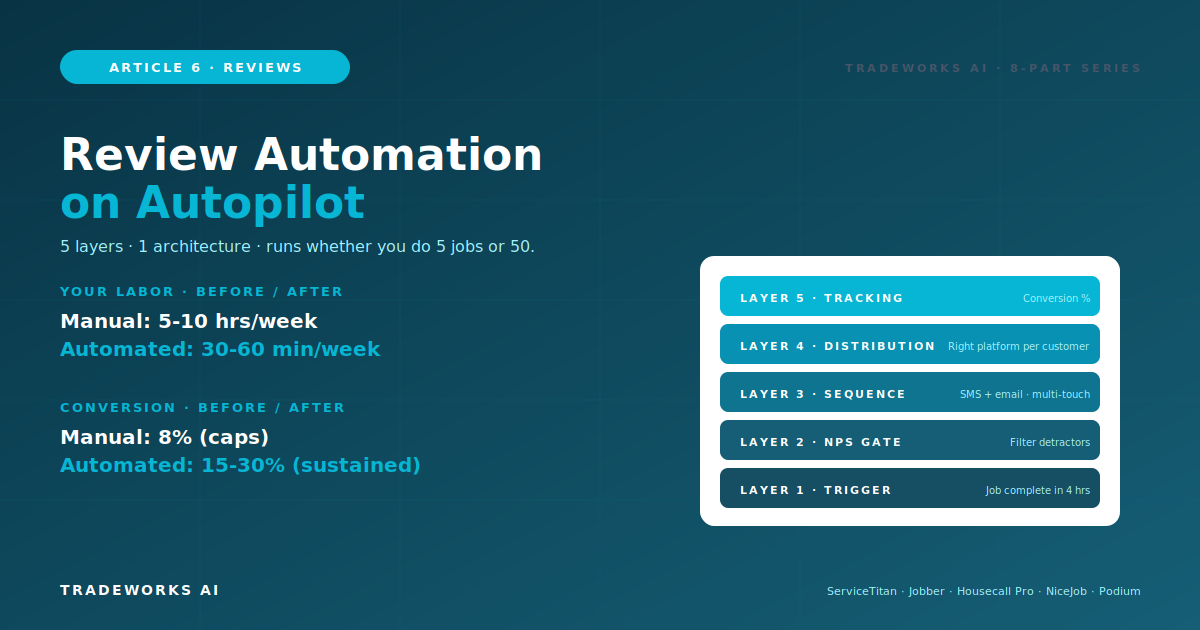

AI search tools extract five specific signals from your review profile when deciding whether to recommend you to a homeowner: volume (with a 200-review threshold for established trust), rating (4.5 stars as the recommendation cutoff), recency (last 6 months heavily weighted), sentiment (semantic analysis of review text, not just star ratings), and specificity (named services, locations, technicians, and outcomes). The 5 signals operate together - missing any one of them caps your AI visibility regardless of how strong the others are. ChatGPT extracts these signals from Angi, Yelp, BBB, and Google primarily. Gemini pulls from Facebook and Nextdoor. Perplexity rewards depth on your own website over platform reviews. Google AI Overviews use Google Business Profile as the dominant source. AI-referred leads convert at 73 percent compared to 31 percent for Google organic leads, because the homeowner already trusts an AI-vetted recommendation when they pick up the phone. This article covers how AI systems actually read reviews using RAG mechanics, the 5 signals AI extracts, the platform-specific behaviors that determine which contractor gets named where, why specificity in review content drives more AI citations than generic reviews, and the practical tactics that move your business into the small share of contractors AI tools actually recommend.

Right now, somewhere in your service area, a homeowner just opened ChatGPT on their phone. Their water heater failed. They typed: "best plumber near me for tankless water heater install in Tampa."

ChatGPT thought for three seconds. Then it gave them three names. Three. Total. Out of every plumber in the entire metro area.

They picked one. They called. They converted at more than twice the rate of a typical Google organic lead because the AI already vetted the choice for them. They never opened Google. Never saw your ads. Never visited the map pack. And if your business was not one of those three names ChatGPT gave them, you never had a chance.

This is not an edge case anymore. 45 percent of consumers now use AI tools to find local services, up from 6 percent in 2025. 1 in 3 homeowners under 45 used an AI assistant to find a home service provider in the past 90 days. 41 percent of consumers trust AI recommendations for local services as much as or more than personal referrals - up from 12 percent in 2024.

The trust curve is steep and accelerating. The contractors who understand how AI tools read reviews and optimize accordingly are the ones being recommended. The contractors who treat reviews as a 2018 SEO play are invisible to the fastest-growing customer discovery channel in contractor history.

Most contractors imagine AI search as a faster Google. The mental model is wrong. AI tools do not match keywords against indexed pages - they use Retrieval-Augmented Generation (RAG) to synthesize recommendations from multiple sources in real time.

The simplified version: when a homeowner asks ChatGPT for a plumber, the AI breaks the query into half a dozen sub-queries. It pulls candidates from independent sources - review platforms, websites, news mentions, directory listings. It reads what those sources say about each candidate. Then it synthesizes a two- or three-contractor recommendation with reasons attached. Three things follow from this.

First: the AI reads review *content*, not just review *count*. The actual words customers wrote about your business become the basis for the recommendation reasoning the AI generates.

Second: recency matters more than it does for Google. The AI is trying to answer "is this business currently active and operating well?" Old reviews answer a different question. Algorithms that need to recommend a contractor right now down-weight stale data more aggressively than Google does.

Third: the AI cross-references multiple sources. A profile that looks strong on Google but is contradicted on Yelp triggers uncertainty. A profile that is consistent across Google, Facebook, and BBB reads as verified. Cross-platform consistency is itself a signal.

AI systems extract five specific signals from your review profile when deciding whether to include you in a recommendation. Each signal is assessed on its own, but they work as a set: weakness in any one of them caps your AI visibility regardless of how strong the others are.

Review count signals established trust. The threshold is 200-plus reviews for AI recommendation eligibility in 2026. Below 200, the recommendation calculation defaults to higher-volume competitors regardless of rating.

Why 200? Sample size validity. AI systems need enough data points to be confident the rating is statistically meaningful. A 4.9-star average from 50 reviews could plausibly come from selective request patterns. A 4.6-star average from 300 reviews could only come from a high-activity business with broad customer exposure.
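The sample-size argument can be made concrete with the standard error of an average rating, which shrinks with the square root of the review count. The sketch below is only an illustration of that statistical idea, not a description of how any AI tool actually scores a profile. The 0.8-star spread is an assumed value chosen for the example.

```python
import math

# Assumption for illustration: individual star ratings vary by about
# 0.8 stars around the average. Real profiles will differ.
ASSUMED_STD_DEV = 0.8

def standard_error(std_dev, review_count):
    """Standard error of the mean rating for a given number of reviews."""
    return std_dev / math.sqrt(review_count)

se_50 = standard_error(ASSUMED_STD_DEV, 50)
se_300 = standard_error(ASSUMED_STD_DEV, 300)

print(f"50 reviews:  average is uncertain by about +/- {se_50:.2f} stars")
print(f"300 reviews: average is uncertain by about +/- {se_300:.2f} stars")
```

Under that assumption, the 50-review average is uncertain by roughly 0.11 stars and the 300-review average by roughly 0.05, which is one way to read why a 4.6 from 300 reviews can be more convincing than a 4.9 from 50.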

The threshold compounds. Once you cross 200, AI tools start surfacing you in recommendations. Each recommendation generates more queries that find your profile. Citation history hardens. The contractors who cross 200 in 2026 will likely own the recommendation slots through 2028.

Average star rating. The threshold is 4.5 stars. Below 4.5, AI recommendations drop dramatically. Above 4.5, the difference between 4.6 and 4.9 is small.

Why 4.5? Both human consumers and AI systems treat 4.5 as the cutoff between trusted and questionable. A 4.4-star business looks like it has unresolved issues. A 4.5-star business looks like a normal business with normal customer experiences. The half-star difference produces an outsized perception swing.

Part 1 in this series covered the 4.5-star threshold in depth. The same dynamic plays out in AI recommendation logic.

Reviews from the last 6 months are heavily weighted. Reviews older than 12 months contribute minimally to current AI recommendations.

A contractor with 500 reviews from 2019-2023 and zero reviews in the last 12 months is almost as invisible to AI recommendations as a contractor with 30 reviews from the last 6 months. The 30-recent contractor often gets recommended ahead of the 500-stale contractor for high-intent queries.

Practical rule: aim for at least 5 to 10 new reviews per month to maintain recency signals. Aim for more if you operate in a competitive market where AI recommendations are contested.
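To see where your own profile stands on recency, you can bucket your review dates by age. The sketch below is a minimal example that uses the 6-month and 12-month windows and the 5-per-month floor from this section. The function name, the day counts used to approximate the windows, and the two sample contractors are all hypothetical.

```python
from datetime import date, timedelta

def recency_profile(review_dates, today):
    """Bucket review dates using the 6-month and 12-month windows."""
    six_months_ago = today - timedelta(days=182)     # approximation of 6 months
    twelve_months_ago = today - timedelta(days=365)  # approximation of 12 months
    recent = sum(1 for d in review_dates if d >= six_months_ago)
    stale = sum(1 for d in review_dates if d < twelve_months_ago)
    per_month = recent / 6
    return {
        "recent_6mo": recent,
        "older_than_12mo": stale,
        "avg_per_month_last_6mo": per_month,
        "meets_5_per_month": per_month >= 5,
    }

today = date(2026, 6, 30)

# Hypothetical contractor with 500 old reviews and nothing recent.
stale_shop = [date(2021, 1, 1)] * 500
# Hypothetical contractor with 30 reviews spread over the last 6 months.
fresh_shop = [today - timedelta(days=6 * i) for i in range(30)]

stale_result = recency_profile(stale_shop, today)
fresh_result = recency_profile(fresh_shop, today)
print(stale_result)
print(fresh_result)
```

The 500-review profile shows zero recent reviews and fails the monthly floor, while the 30-review profile just clears it at 5 per month.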

AI systems analyze review text for sentiment, not just star rating. A 4-star review with strongly positive language can carry more recommendation weight than a 5-star review with terse content.

Sentiment analysis pulls out enthusiasm, specific positive language, and emotional engagement. Reviews that say "excellent" or "amazing" or "saved us during the heat wave" score higher in sentiment analysis than reviews that say "good" or "okay" or "did the job."

What this means for review acquisition: the customer experience matters more than just the star count. Customers who feel strongly positive write reviews with strongly positive language. Customers who are mildly satisfied write generic reviews regardless of star rating.

This is the signal most contractors do not optimize for - and the one that produces the most AI citation lift.

AI systems prefer reviews with specific details: named services, named technicians, named neighborhoods, specific outcomes, specific timeframes. These reviews get cited verbatim in AI-generated recommendations because they provide reasoning the AI can extract.

Specific review: "Tony replaced our AC capacitor in 90 minutes during the Tampa heat wave last week. Showed up on time, explained the issue, fair price. We will call again."

This review gives the AI six different facts to cite: technician name, specific service, time-to-completion, weather context, location reference, customer behavior signal. AI tools cite this kind of review verbatim when generating recommendation reasoning.

How to encourage specificity without scripting (which violates platform TOS): in your review request message, ask a guiding question. Examples: "What did our team do that stood out?" "What was the outcome?" "Would you mention the technician's name?" These prompts produce more specific reviews without dictating content.

Different AI tools source from different review platforms. Same query, four AI tools, four different contractor lists. Part 5 covered the platform tier system. This part goes deeper on what each AI does with reviews from each source.

ChatGPT's primary review sources: Angi, Yelp, BBB, Google. ChatGPT integrates directly with Angi data. It cross-references Yelp content with Google reviews to verify consistency. It uses BBB for trust verification, particularly on higher-ticket services.

Specific behavior: ChatGPT favors national franchises such as Roto-Rooter and Benjamin Franklin Plumbing for emergency searches. Local independents need stronger signals across all five review categories to compete with franchise brand recognition.

Optimization priority for ChatGPT visibility: Google primary, Angi secondary if you participate in Angi lead-gen, BBB accreditation if you handle high-ticket services.

Gemini's primary review sources: Facebook, Nextdoor, plus broader web verification. Gemini specifically pulls from social platforms that other AI tools largely ignore.

Specific behavior: Gemini weights neighborhood-level recommendations heavily. A contractor mentioned positively in Nextdoor neighborhood threads gets recommended for queries from that geography even if other signals are thinner.

Optimization priority for Gemini visibility: Facebook business page with active reviews, Nextdoor business page or active personal account engagement, neighborhood-specific content on your website.

Primary signal: your own website depth. Perplexity rewards specific, well-built websites more than any other AI tool.

Specific behavior: Perplexity cited 17 sources for a single AC repair query in one test, including direct links to 14 local HVAC company websites. The platform-review weight is lower because Perplexity prefers original-source content.

Optimization priority for Perplexity visibility: deep service pages on your website, FAQ content matching real homeowner queries, schema markup, and original content depth.

Google AI Overviews' primary review source: Google Business Profile. This is the closest AI tool to traditional local SEO logic.

Specific behavior: AI Overviews now appear in 68 percent of local searches and 92 percent of informational local queries. The map pack only shows up for 39 percent of searches. The AI summary is increasingly the only result customers see.

Optimization priority for Google AI Overview visibility: maximum optimization of Google Business Profile, full schema markup on your website, FAQ content that maps to AI Overview question patterns.

Apple Siri's expected primary review sources: Yelp (Apple Maps integration), Apple Maps reviews directly, plus broader web verification.

Specific behavior: still being defined as the platform matures. Early indications suggest heavy weighting on Apple Maps presence and Yelp consistency.

Optimization priority for Apple Siri visibility: Apple Maps business profile claimed and complete, Yelp presence even with passive acquisition, NAP consistency across iOS-relevant directories.

The specificity signal deserves its own section because it is the single highest-leverage tactic for AI visibility that most contractors do not actively pursue.

When ChatGPT generates a contractor recommendation, it does not just say "this contractor has good reviews." It says something like: "This company is highly rated for same-day emergency service." Or: "This contractor has consistent positive reviews mentioning water heater installation specifically."

Where does the AI get those specific reasons? From the actual content of customer reviews. The recommendation reasoning gets pulled directly from review text.

A profile of 200 reviews that all say "great service!" gives the AI nothing to cite. A profile of 200 reviews with 60 percent including specific service mentions, neighborhood names, and outcome details gives the AI rich material to construct compelling recommendation reasoning.

Tactical implementation: in your review request workflow, include a guiding prompt that encourages specificity without dictating content. Examples that work: "What did our team do that stood out?" "What was the project and how did it turn out?" "Would you mention which technician helped you?"

These prompts produce reviews that average 60-90 words instead of 15-30 words and contain 3-5 specific facts the AI can extract. The increase in AI citation rate is dramatic - estimated 2-3x more frequent recommendation surfacing for businesses with high-specificity review profiles.
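A rough way to track whether your prompts are working is to measure average review length and the share of reviews that mention a concrete detail. The sketch below assumes a hand-maintained list of detail terms (service names, technician names, locations), which is a hypothetical stand-in. It is a simple keyword check, not the semantic analysis AI tools perform.

```python
def word_count(review):
    """Count whitespace-separated words in a review."""
    return len(review.split())

def specificity_summary(reviews, detail_terms):
    """Average length and share of reviews mentioning any detail term."""
    if not reviews:
        return {"avg_words": 0.0, "specific_share": 0.0}
    terms = [t.lower() for t in detail_terms]
    specific = [r for r in reviews if any(t in r.lower() for t in terms)]
    return {
        "avg_words": sum(word_count(r) for r in reviews) / len(reviews),
        "specific_share": len(specific) / len(reviews),
    }

# Hypothetical detail terms for one contractor; maintain your own list.
detail_terms = ["capacitor", "water heater", "tampa", "tony"]

reviews = [
    "Tony replaced our AC capacitor in 90 minutes during the Tampa heat "
    "wave last week. Showed up on time, explained the issue, fair price. "
    "We will call again.",
    "Great service!",
]

summary = specificity_summary(reviews, detail_terms)
print(summary)
```

Run it monthly against new reviews and watch whether both numbers move up after you change your request prompts.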

When a contractor crosses the threshold for AI recommendation eligibility (200-plus reviews, 4.5-plus rating, recent activity, specific content), the AI starts surfacing them in recommendations. Each recommendation generates queries that find their profile. Citation history grows. The AI gets progressively more confident in the existing recommendation.
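The thresholds above can be turned into a quick self-check. The sketch below is a minimal example that uses the article's numbers for volume (200), rating (4.5), and recency (5 new reviews per month). The 60 percent specificity cutoff is an assumption borrowed from the illustration earlier in this section, sentiment is left out because it has no stated threshold, and the class and function names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class ReviewProfile:
    total_reviews: int
    avg_rating: float
    new_reviews_per_month: float
    specific_review_share: float  # fraction of reviews with concrete details

def eligibility_gaps(profile, min_specific_share=0.6):
    """Return the signals where the profile falls short of the thresholds."""
    gaps = []
    if profile.total_reviews < 200:
        gaps.append("volume")
    if profile.avg_rating < 4.5:
        gaps.append("rating")
    if profile.new_reviews_per_month < 5:
        gaps.append("recency")
    # Assumed cutoff: the article gives 60 percent only as an illustration.
    if profile.specific_review_share < min_specific_share:
        gaps.append("specificity")
    return gaps

strong = ReviewProfile(240, 4.7, 8, 0.65)
weak = ReviewProfile(50, 4.9, 2, 0.10)

print(eligibility_gaps(strong))
print(eligibility_gaps(weak))
```

An empty list means no gap on the checked signals. The second profile shows how a 4.9 rating alone does not close the volume, recency, and specificity gaps.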

This produces a winner-takes-most dynamic. The contractors who cross the threshold first lock in citation slots that compound over time. Late entrants face increasing difficulty displacing entrenched recommendations because the AI defaults to the recommendation history it has built.

The 1.2 percent of local businesses recommended by ChatGPT today (per SOCi 2026 Local Visibility Index) is not a fixed list - but it is hardening fast. The contractors who own the citations in 2026 are likely to own the recommendations through 2028 the way local pack winners owned Google through the 2010s.

Practical implication: the cost of waiting is rising fast. Contractors who put off AI optimization until 2027 will face dramatically higher difficulty than those who start in 2026, because competitors who started earlier will have hardened citation positions.

Cross the volume threshold. Run the 5-Pillar Acquisition System from Part 2 to add 100-plus reviews in 90 days. Get to 200 total minimum.

Maintain rating above 4.5. The Quality Gate from Part 2 protects rating by filtering detractors from public review requests.

Sustain recency. 5-10 new reviews per month minimum. Active acquisition forever, not a one-time push.

Encourage specificity. Update your review request prompts to ask guiding questions. Average review length should grow from 15-30 words to 60-90 words.

Distribute across platforms. Part 5's tier system covers which platforms to expand to per trade. ChatGPT visibility requires Angi/Yelp/BBB. Gemini visibility requires Facebook/Nextdoor.

Optimize responses for AI extraction. Part 3's R-A-T-E framework includes service keywords and locations. AI tools crawl responses too.

B2A (business to agent) is the present. Customers ask AI for recommendations. AI surfaces 2-3 contractors. Customer calls one.

A2A (agent to agent) is the very near future. The customer is no longer asking AI for a recommendation - the customer delegates the entire booking workflow to an AI agent. The customer's AI checks availability, books the appointment, handles the payment.

In an A2A world, your AI voice agent (Cat 10 Part 2 in this library's series) is no longer the only AI on the phone - it is talking to another AI on the customer side. Reviews remain the dominant trust currency in this dynamic. The customer's AI selects providers based on the same 5 signals, weighted even more heavily because there is no human override in the selection.

The contractors building review profiles strong enough to be recommended by AI today are building infrastructure for the agent-to-agent commerce of 2027-2028.

Reviews are no longer just a Google ranking factor or a conversion lever. They are the trust infrastructure of AI search. The 5 signals AI extracts are the new map of what reviews actually do for your business.

Volume gets you eligible. Rating gets you recommended. Recency keeps you recommended. Sentiment makes the recommendation enthusiastic. Specificity makes the recommendation reasoning compelling enough to drive the call.

Contractors who optimize for all five signals lock in AI recommendation positions that compound over years. Contractors who treat reviews as a 2018 marketing tactic become invisible to 45 percent of consumers and rising.

Read Part 8 next: Building a Review-First Culture - Training Your Team to Ask for Reviews Without Being Awkward. The operational and cultural piece that ties the systems from Parts 2-7 into how your team actually executes day to day.

Google reviews still matter - more than ever. Google Business Profile is the primary source for Google AI Overviews, which now appear in 68 percent of local searches. Strong Google reviews remain the foundation of contractor visibility. AI-era optimization extends beyond Google to capture ChatGPT, Gemini, and Perplexity audiences - it does not replace Google.

Run a Share of Model audit (covered in Cat 10 Part 8). Build a list of 25 queries your customers might ask an AI - near-me queries, best-in-city queries, problem queries, pricing queries. Run each query manually in ChatGPT, Gemini, Perplexity, and Google AI Mode. Log whether you appear, who else appears, and which sources got cited. Most local contractors start under 10 percent Share of Model.
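If you log the audit in a spreadsheet, the tally is simple arithmetic. The sketch below assumes Share of Model means the fraction of query runs in which your business appears, which is a working definition for this example rather than a formal one. The log entries are made up for illustration.

```python
from collections import defaultdict

def share_of_model(log):
    """log: list of (tool, query, appeared) tuples from manual audit runs."""
    runs = defaultdict(int)
    hits = defaultdict(int)
    for tool, _query, appeared in log:
        runs[tool] += 1
        hits[tool] += int(appeared)
    per_tool = {tool: hits[tool] / runs[tool] for tool in runs}
    overall = sum(hits.values()) / sum(runs.values()) if log else 0.0
    return overall, per_tool

# Hypothetical audit log: two queries run across four AI tools.
audit_log = [
    ("ChatGPT", "best plumber near me", True),
    ("ChatGPT", "tankless water heater install cost", False),
    ("Gemini", "best plumber near me", False),
    ("Gemini", "tankless water heater install cost", False),
    ("Perplexity", "best plumber near me", True),
    ("Perplexity", "tankless water heater install cost", False),
    ("Google AI Mode", "best plumber near me", False),
    ("Google AI Mode", "tankless water heater install cost", False),
]

overall, per_tool = share_of_model(audit_log)
print(f"Overall Share of Model: {overall:.0%}")
for tool, share in per_tool.items():
    print(f"{tool}: {share:.0%}")
```

A full audit would use the 25 queries described above, giving 100 runs across the four tools. Keeping the per-tool split shows which platform to work on first.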

You can prioritize one AI platform, but you should not specialize in it. ChatGPT holds 60 percent of AI search market share, so it is the highest-volume target. But Gemini is growing 12 percent quarter-over-quarter, and Apple Siri/World Knowledge Answers will reshape iOS user behavior. Single-platform AI optimization is fragile. Multi-platform review presence is the durable strategy.

Ask guiding questions in your review request, not scripted content. "What did our team do that stood out?" gives the customer something to think about without dictating what they say. Scripted templates that customers copy-paste violate platform TOS and produce reviews AI tools detect as inauthentic. Guiding questions are permitted and produce better content.

A 4.9 rating helps only marginally over a 4.6. The big jump is below versus above 4.5. Within the 4.5-5.0 range, rating differences matter less than other signals. A 4.6-star contractor with 300 reviews and active recency easily outranks a 4.9-star contractor with 50 reviews and stale activity. Optimize for the floor (4.5+), then prioritize volume, recency, sentiment, and specificity.

AI tools update their recommendation logic on different cadences. Google AI Overviews refresh in near real time. ChatGPT, Gemini, and Perplexity refresh on their own internal schedules, ranging from weeks to months. Plan for 90-180 days from the start of dedicated AI optimization to measurable phone call impact. The compounding effect kicks in after that.

Most contractors think their review profile is "fine." Then we benchmark it against the local competitors who are taking the AI recommendation slots. Our free audit checks volume, recency, rating, response rate, and platform coverage - and shows you the highest-leverage moves to get to 4.7+ stars and 200+ reviews this year.
